Read More Creating Your First Chat GPT Plugin: A Step-by-Step Guide

Read More AVIF and WebP: Two next-generation Image Formats in Comparison

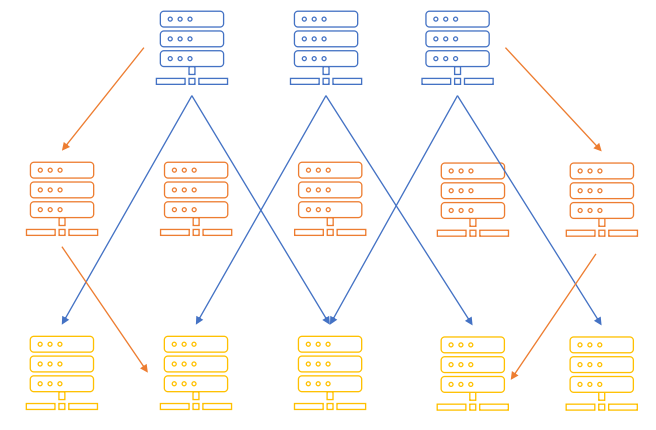

Read More Three distributed File Systems for High-Performance Computing

Read More TensorFlow 2 - What is new and what are the Highlights

Read More Bitcoin and Ethereum in Comparison: All you need to know and what's the Difference

Read More Put to Test: PaddleOCR Engine Example and Benchmark

Read More PaddlePaddle – The little known Game Changer in Deep Learning from far East